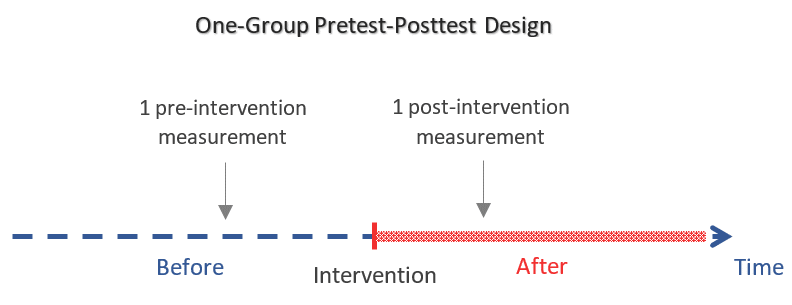

The one-group pretest-posttest design is a type of quasi-experiment in which the outcome of interest is measured 2 times: once before and once after exposing a non-random group of participants to a certain intervention/treatment.

The objective is to evaluate the effect of that intervention, which can be:

- A training program

- A policy change

- A medical treatment, etc.

The one-group pretest-posttest design has 3 major characteristics:

- The group of participants who receive the intervention is selected in a non-random way, which makes it a quasi-experimental design.

- There is no control group against which the outcome can be compared.

- The effect of the intervention is measured by comparing the pre- and post-intervention measurements (the null hypothesis is that the intervention has no effect, i.e. the 2 measurements are equal).
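The comparison described in the last point can be sketched as a paired analysis: compute each participant's change, then check how far the mean change is from zero relative to its standard error. The scores below are made-up illustration data, and the paired t statistic is one common choice for this comparison, not something the design itself prescribes.

```python
import math
import statistics

def paired_t_statistic(pretest, posttest):
    """Return (mean_change, t_statistic, degrees_of_freedom) for paired scores."""
    if len(pretest) != len(posttest):
        raise ValueError("each participant needs both a pretest and a posttest score")
    # Per-participant change: negative means the score went down after the intervention.
    changes = [post - pre for pre, post in zip(pretest, posttest)]
    n = len(changes)
    mean_change = statistics.mean(changes)
    # Standard error of the mean change, based on the sample standard deviation.
    standard_error = statistics.stdev(changes) / math.sqrt(n)
    return mean_change, mean_change / standard_error, n - 1

# Hypothetical scores for 6 participants (illustration only).
pretest = [50, 47, 52, 44, 49, 46]
posttest = [45, 44, 46, 43, 44, 42]

mean_change, t_stat, df = paired_t_statistic(pretest, posttest)
print(f"mean change = {mean_change:.2f}, t = {t_stat:.2f}, df = {df}")
```

Under the null hypothesis of no effect, the t statistic would be compared against a t distribution with `df` degrees of freedom. Even a large statistic only shows that the scores changed, not that the intervention caused the change.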

Advantages of the one-group pretest-posttest design

1. Feasible when random assignment of participants is considered unethical

Random assignment of participants is considered unethical when the intervention is believed to be harmful (for example, exposing people to smoking or dangerous chemicals) or, on the contrary, when it is believed to be so beneficial that it would be unethical to withhold it from any participant (for example, a groundbreaking treatment or medical operation).

2. Feasible when randomization is impractical

In some cases, where the intervention acts on a group of people at a given location, it becomes difficult to adequately randomize subjects (e.g. an intervention that reduces pollution in a given area).

3. Requires fewer resources than most designs

Because there is no control group to recruit and follow up, the one-group pretest-posttest design does not require a large sample size or a high cost.

4. No temporality issue

Since the posttest is measured after the intervention, we can be certain that any change in the outcome occurred after the intervention, which is a necessary condition for inferring a causal relationship between the two.

The one-group pretest-posttest design is an improvement over the one-group posttest-only design, as it adds a pretest measurement against which we can estimate the effect of the intervention. However, it has some major limitations, which are discussed next.

Limitations of the one-group pretest-posttest design

This design uses the outcome of the pretest to judge what might have happened if the intervention had not been implemented. The problem with this approach is that the difference between the outcome of the pretest and the posttest might be due to factors other than the intervention.

Here is a list of factors that can bias a one-group pretest-posttest study:

1. History

History refers to events (other than the intervention) that take place between the pretest and the posttest and can affect the outcome of the posttest. The longer the interval between the pretest and the posttest, the higher the risk that history will bias the study.

Example: A commercial to help people quit smoking (the intervention) may air at the same time as a new warning is introduced on cigarette packs (a co-occurring event).

2. Maturation

Maturation refers to things that vary naturally with time, such as seasonality effects, psychological factors that may change with time, or the worsening or improvement of a disease or condition. These can bias the study if they affect the outcome of the posttest.

Example: People may feel overwhelmed after starting a new job, then calm down as time passes. So a one-group pretest-posttest study targeting people in their first week at work may be under the influence of maturation due to the participants’ varying levels of stress.

The difference between the two is that maturation affects the study through factors that occur naturally with time, such as biological cycles or psychological changes that happen without external influence.

History, by contrast, affects the study through events that are neither natural nor usual and that happen around the same time as the intervention.

3. Testing

The testing effect is the influence of the pretest itself on the outcome of the posttest. This happens when just taking the pretest increases the experience, knowledge, or awareness of participants, which changes their posttest results (this change will occur irrespective of the intervention).

Example: The more IQ tests a person takes, the more practiced they become at the kind of thinking these tests require, so they do better on subsequent IQ tests. So, when studying the effect of a certain intervention on IQ, a pretest IQ score cannot be directly compared to a posttest IQ score, as the estimated effect of the intervention will be biased by the effect of testing.

Another example is when asking people about their hygiene in a pretest makes them more attentive to their hygiene and therefore affects posttest results.

4. Instrumentation

The instrumentation effect refers to changes in the measuring instrument that may account for the observed difference between pretest and posttest results. Note that sometimes the measuring instrument is the researchers themselves, who record the outcome.

Example: Fatigue, loss of interest, or, conversely, an improvement in the researcher's measuring skills between the pretest and the posttest may introduce instrumentation bias.

5. Differential loss to follow-up

Loss to follow-up constitutes a problem if the group of participants who quit the study (i.e. those who did the pretest and quit before they were assessed on the posttest) differs from the group who stayed until the study was over, i.e. if the loss to follow-up is not random.

Example: If some participants who took the pretest were discouraged by their results and left the study before the posttest, then the remaining group would differ systematically from the original one, and the study might be biased toward showing that the intervention is better than it actually is.
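One way this bias can arise is sketched below with made-up data. The assumptions are that higher scores are better, that the lowest pretest scorers drop out, and that the intervention has no effect at all (each completer's posttest equals their pretest).

```python
import statistics

# Hypothetical (pretest, posttest) pairs; None means the participant left
# before the posttest. The intervention is assumed to have NO effect.
participants = [
    (40, None), (45, None), (50, None),   # discouraged low scorers who dropped out
    (60, 60), (65, 65), (70, 70), (75, 75),
]

all_pretests = [pre for pre, _ in participants]
completers = [(pre, post) for pre, post in participants if post is not None]

# Naive comparison: everyone's pretest mean versus the completers' posttest mean.
completer_posttests = [post for _, post in completers]
naive_change = statistics.mean(completer_posttests) - statistics.mean(all_pretests)

# Paired comparison: the same people at both time points.
paired_change = statistics.mean([post - pre for pre, post in completers])

print(f"naive change: {naive_change:.1f}")
print(f"paired change among completers: {paired_change:.1f}")
```

The naive comparison shows an apparent improvement even though nobody's score changed. Restricting the analysis to completers removes this particular artifact, but it cannot tell us how the participants who left would have responded.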

6. Regression to the mean

Regression to the mean happens when the study group is selected because of its unusual scores on a pretest (either unusually high or unusually low). On a subsequent test (i.e. the posttest), we would expect these scores to move naturally toward the mean, even without any intervention.

Example: Imagine asking a group of people “how much money did you spend today on shopping?”, selecting the top 10 who spent the most, and summing up their expenditures. If we asked the same question to those 10 people again after some time, then almost certainly the sum spent on shopping the second time will be lower. This is because unusual behavior/scoring is hard to sustain.
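The shopping example can be simulated in a few lines. This is a sketch under a deliberately extreme assumption: each person's spending on a given day is an independent random draw from the same distribution, so nobody is a big spender by nature. The population size, the average spending, and the exponential distribution are all hypothetical choices.

```python
import random

rng = random.Random(0)  # fixed seed so the run is repeatable

N_PEOPLE = 1000
TOP_K = 10
MEAN_SPEND = 30.0  # hypothetical average daily spending

# Assumption: spending on each day is an independent draw per person.
day1 = [rng.expovariate(1 / MEAN_SPEND) for _ in range(N_PEOPLE)]
day2 = [rng.expovariate(1 / MEAN_SPEND) for _ in range(N_PEOPLE)]

# Select the 10 people who spent the most on day 1.
top_ids = sorted(range(N_PEOPLE), key=lambda i: day1[i], reverse=True)[:TOP_K]

sum_day1 = sum(day1[i] for i in top_ids)
sum_day2 = sum(day2[i] for i in top_ids)

print(f"top {TOP_K} on day 1: {sum_day1:.0f}")
print(f"same people on day 2: {sum_day2:.0f}")
```

No intervention took place between the two days, yet the selected group's total drops sharply. In real data people differ persistently, so the regression would usually be partial rather than complete.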

How to deal with these limitations?

In general, we would be more confident that the observed effect is only due to the intervention if:

- The study conditions were under control.

- Participants were isolated from the outside world.

- The time interval between pretest and posttest was short.

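More specifically, to reduce the effect of maturation and regression to the mean, we can add a second pretest measurement before the intervention. The change between the two pretests then indicates how much the outcome shifts on its own, without the intervention.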
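A minimal sketch of how an extra pretest could be used: estimate the trend between the two pretests, project it forward, and compare the posttest against that projection. The function name, its parameters, and the linear-trend assumption are hypothetical illustrations, and the numbers are made up.

```python
def trend_adjusted_change(pretest1, pretest2, posttest,
                          pretest_gap, intervention_gap):
    """Return (observed_change, adjusted_change) for group-level scores.

    pretest_gap: time between the two pretests.
    intervention_gap: time between the second pretest and the posttest.
    Assumes that, without the intervention, the outcome would keep changing
    at the same linear rate seen between the two pretests.
    """
    if pretest_gap <= 0:
        raise ValueError("pretest_gap must be positive")
    trend_per_unit_time = (pretest2 - pretest1) / pretest_gap
    expected_without_intervention = pretest2 + trend_per_unit_time * intervention_gap
    observed_change = posttest - pretest2
    adjusted_change = posttest - expected_without_intervention
    return observed_change, adjusted_change

# Hypothetical group means: a stress score falls from 60 to 56 over 2 weeks
# with no intervention, then to 50 two weeks after the intervention.
observed, adjusted = trend_adjusted_change(60, 56, 50, pretest_gap=2, intervention_gap=2)
print(f"observed change: {observed}, change beyond the prior trend: {adjusted}")
```

In this made-up case, most of the observed drop is what the pre-existing trend would have produced anyway. The linear projection is only as good as the assumption behind it, which two pretests alone cannot verify.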

Example of a study that used the one-group pretest-posttest design

Kimport & Hartzell conducted a one-group pretest-posttest quasi-experiment to study the effect of clay work (as a form of art therapy) on the anxiety of 49 psychiatric inpatient volunteers.

Pretest, posttest, and intervention

In order to measure anxiety, a self-report questionnaire was used as a pretest and posttest. The intervention was the creation of a clay pinch pot.

Results

The anxiety score decreased from 46.8 on the pretest to 39.3 on the posttest, a statistically significant difference.

Limitations

The limitations mentioned in the study were co-occurring treatments or alternative explanations that can bias the study (i.e. only history effects):

- The intervention might have been effective in reducing anxiety by providing a simple distraction or a new experience for these patients.

- The group-talk between participants may have been a biasing factor.

- The personality of the researcher administering the intervention may have played a role in reducing anxiety.

References

- Reichardt CS. Quasi-Experimentation: A Guide to Design and Analysis. The Guilford Press; 2019.

- Campbell DT, Stanley JC. Experimental and Quasi-Experimental Designs for Research. Wadsworth; 1963.

- Shadish WR, Cook TD, Campbell DT. Experimental and Quasi-Experimental Designs for Generalized Causal Inference. 2nd edition. Cengage Learning; 2001.